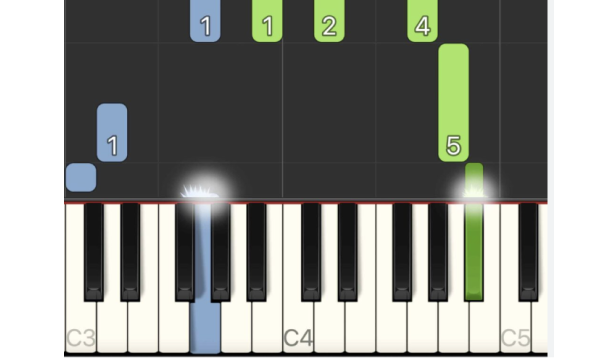

There are a lot of piano “tutorial” videos out there, but most of them aren’t step-by-step walkthroughs. They mostly look like falling raindrops that light up piano keys. These tutorials are often made with a tool called Synthesia.

I’ve struggled with these videos because I can’t keep up with the falling notes or figure out the chords I need to play. It’s up to you to keep track of the light bars.

For example, I wanted to learn a Telugu song called Samayama, but it only came in video tutorial format.

So I built PianoReader. PianoReader is a web tool that watches piano tutorial videos and spits out the notes and chords. As a bonus, it does it all in the browser without extra server compute.

It takes in a tutorial video and outputs piano tablature that looks like this:

| Left Hand | Right Hand |
| --------- | ---------- |
| A Maj | A D |
| A Maj | A |
| D min | D |

How it works

I knew this problem was going to involve some level of computer vision. It needed some kind of classifier or bounding box detection to make it work.

Alternatively, I considered training a model. But I didn’t want to sit and label hundreds of videos to predict notes. I mean, if I did the work to label just one video, I wouldn’t even have to build this :)

HTML Canvas

HTML Canvas is a cheap, client-side option for video processing. No server needed, just the browser.

The video element ships with a convenient way to pull in frames. `requestVideoFrameCallback` lets you hook into every frame of the video with a callback, and from there you can draw the frame onto the canvas:

```javascript
const canvas = document.getElementById("myCanvas");
const ctx = canvas.getContext("2d");
const video = document.getElementById("video1"); // Get your video element

const drawVideoToCanvas = () => {
  // Copy the current video frame onto the canvas, then read its pixels
  ctx.drawImage(video, 0, 0, canvas.width, canvas.height);
  const frame = ctx.getImageData(0, 0, canvas.width, canvas.height);
  // do some work here with the frame

  // Re-register the callback to be notified about the next frame
  video.requestVideoFrameCallback(drawVideoToCanvas);
};

// Start the drawing loop when the video starts
video.requestVideoFrameCallback(drawVideoToCanvas);
```

Detecting Piano Keys

Before I could detect the notes pressed, I had to determine the piano key placement in a video.

Different kinds of virtual pianos in tutorials

Every piano in a video has different spacing, colors, and start positions. The easiest way to determine the piano location was by asking the user to tell me where the keys were.

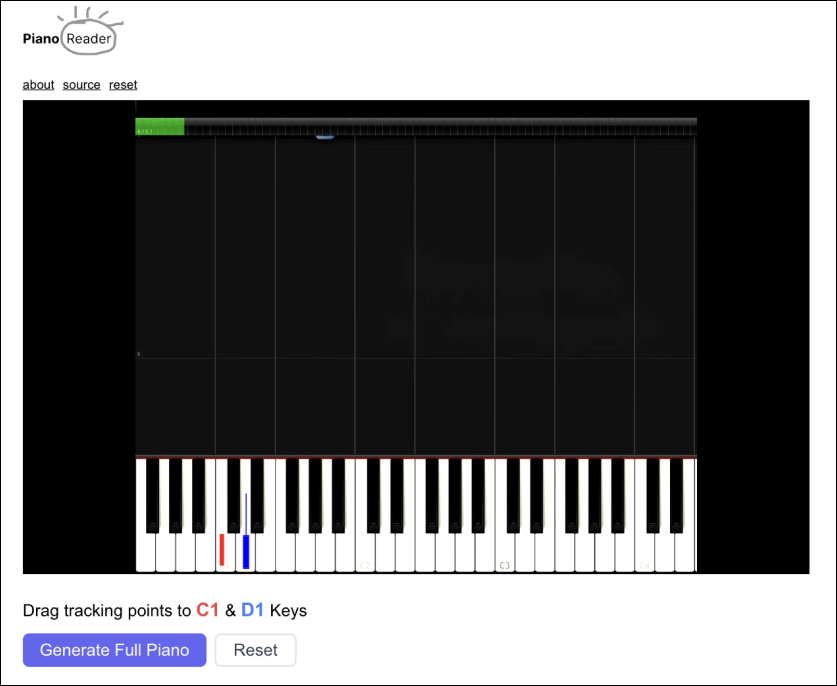

Step 1 is to label the C1 and D1 piano keys

I was also surprised to learn that piano keys are not all spaced equally. This drove me bonkers while trying to generate the rest of the piano keys. The lines never aligned perfectly.

Luckily, the tutorial I wanted to run only used the white keys, so I didn’t worry about the sharps in this project.

To make the video canvas interactive, I used Fabric.js. Fabric gave me an easy way to build a drag-and-drop canvas. In my case, I had users position two Fabric rectangles over the first two keys, and then I generated the rest of the piano from them.
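Here is a minimal sketch of how the remaining white keys could be extrapolated from the two labeled rectangles. It assumes the white keys are evenly spaced and that the first labeled key is C1, which matches the white-keys-only case described above. The function name `generateWhiteKeys` and the rectangle shape (`left`, `top`, `width`, `height`) are hypothetical and not taken from PianoReader's source.

```javascript
// Hypothetical helper: extrapolate white-key regions from two labeled keys.
// Assumes evenly spaced white keys, starting at C1 and moving right.
const WHITE_NOTES = ["C", "D", "E", "F", "G", "A", "B"];

function generateWhiteKeys(c1Rect, d1Rect, canvasWidth) {
  // The distance between the two labeled keys is one white-key step
  const step = d1Rect.left - c1Rect.left;
  if (step <= 0) throw new Error("D1 must be placed to the right of C1");

  const keys = [];
  // Keep adding keys while the next one still fits inside the canvas
  for (let i = 0; c1Rect.left + i * step + c1Rect.width <= canvasWidth; i++) {
    keys.push({
      name: WHITE_NOTES[i % 7] + (1 + Math.floor(i / 7)), // "C1", "D1", ... "C2"
      x: c1Rect.left + i * step,
      y: c1Rect.top,
      w: c1Rect.width,
      h: c1Rect.height,
    });
  }
  return keys;
}
```

Because everything is derived from one measured step, any error in placing the two rectangles accumulates toward the right edge of the keyboard, which is one plausible reason the generated lines drift out of alignment.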

Detecting Notes

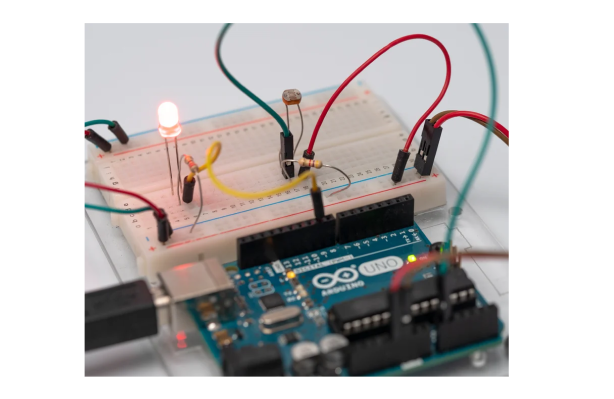

If I were building this with an Arduino, I would have used something like a photoresistor to detect whether a key was pressed.

Demonstration of an Arduino light sensor

I would then place this “tracker” on every single key of the piano to get the keys pressed at a certain frame. I did something similar virtually by sampling the key regions on my canvas.

```javascript
// iterate through the visible piano keys on the video
const keysPressedInFrame = [];

for (const key of pianoKeys) {
  // read the pixels in this key's sample region
  // (assumes each key stores its region as x, y, w, h)
  const pixels = ctx.getImageData(key.x, key.y, key.w, key.h);

  // do some work to check if the key is lit up or not
  const isKeyPressed = determineIfKeyIsPressed(pixels);

  if (isKeyPressed) keysPressedInFrame.push(key);
}
```

To accurately determine a key press, I applied a grayscale filter to the canvas to get the shade of the pressed area. Once I knew whether each key was ON or OFF, I passed the set of pressed keys to tonal.js to get any chord they formed.
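The grayscale check could look something like the sketch below. It assumes the pixel data is in the RGBA layout that `getImageData` returns, and it treats a key as pressed when its average shade moves far enough from its resting shade. The names `averageLuminance` and `isKeyLit`, the resting value, and the default tolerance are all hypothetical, so treat this as an illustration rather than PianoReader's actual logic.

```javascript
// Average luminance (0-255) of an RGBA pixel buffer, e.g. ImageData.data
function averageLuminance(rgba) {
  let total = 0;
  for (let i = 0; i < rgba.length; i += 4) {
    // Rec. 601 luma weights; the alpha channel (rgba[i + 3]) is ignored
    total += 0.299 * rgba[i] + 0.587 * rgba[i + 1] + 0.114 * rgba[i + 2];
  }
  return total / (rgba.length / 4);
}

// Hypothetical check: a key counts as pressed when its shade differs
// from its resting shade by more than the tolerance
function isKeyLit(rgba, restingLuminance, tolerance = 40) {
  return Math.abs(averageLuminance(rgba) - restingLuminance) > tolerance;
}
```

Comparing against a resting shade, instead of a fixed cutoff, is a design choice that lets the same check work for tutorials with different key and highlight colors. The tolerance would likely need tuning per video.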

Final Thoughts

The good news is that I did learn the song I wanted as a result of this tool.

Honestly, I wanted the tool to do a lot more, but some of the sub-problems were pretty heavy.

Load tutorials from YouTube

Ideally, I would have liked to drop in a YouTube link and have it analyzed. But one limitation of processing with HTML Canvas is cross-origin video: drawing a video from another origin without CORS approval taints the canvas, and the browser then blocks reading pixels back out of it. So for this tool, you have to download the video before you can process it.
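For the downloaded-file route, one way to feed a local video into the page is through a blob URL, which the browser treats as same-origin so the canvas stays readable. This is a sketch under assumptions: `loadLocalVideo` is a hypothetical helper, and the `urlApi` parameter exists only so the function can be exercised outside a browser.

```javascript
// Hypothetical helper: point a <video> element at a user-selected local file.
// urlApi defaults to the global URL object; it is a parameter only to keep
// the sketch testable.
function loadLocalVideo(video, file, urlApi = URL) {
  const objectUrl = urlApi.createObjectURL(file);
  video.src = objectUrl; // blob: URLs are same-origin, so no tainted canvas

  // Return a cleanup function that releases the blob URL when done
  return () => urlApi.revokeObjectURL(objectUrl);
}
```

In a page, this could be wired to a file input's `change` event, passing `input.files[0]` as the file and calling the returned function once processing finishes.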

Slow processing

Because I have to process every frame in the video with a callback, the video has to play in its entirety to get all of the notes. If you speed up the video for faster processing, frames get dropped, the timing drifts, and the song sounds weird.

So for now, the tool isn’t without its limitations. It only works with the white keys. It may be off by a note. And sometimes it needs extra tuning. But hey, it works, and I learned a song from it.

Give it a try at pianoreader.app. The project is also open source on GitHub. Happy learning!